Language: Python, Jupyter Notebook

Tool Type: Algorithm

License: Creative Commons Attribution 4.0

Version: N/A

SignLLM Research Collective

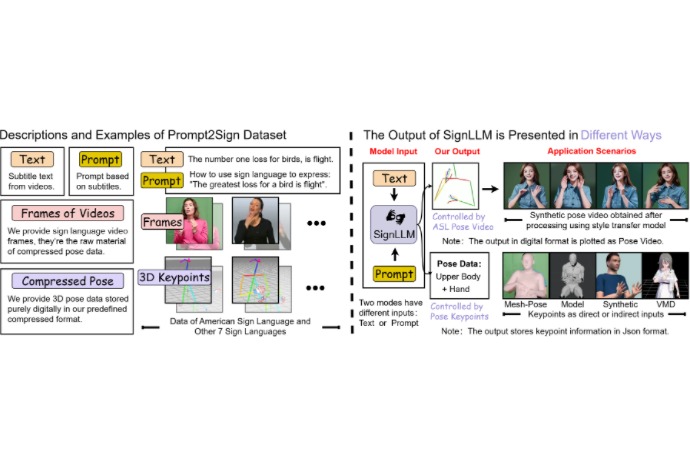

SignLLM is a language model designed for multilingual sign language production. It offers two modes, MLSF and Prompt2LangGloss, that generate sign language gestures from text and questions. It uses a reinforcement learning approach to improve the quality of training and data generation. For its development, SignLLM employs Prompt2Sign, a multilingual dataset that covers American Sign Language and seven other sign languages. The dataset standardizes information by extracting poses from videos into a unified format, which supports SignLLM's strong performance in sign language tasks.

SignLLM addresses the problem of generating sign language gestures from query texts and questions, facilitating inclusive communication. It uses a multilingual large language model and a novel reinforcement learning approach to improve training quality and efficiency across multiple sign languages.



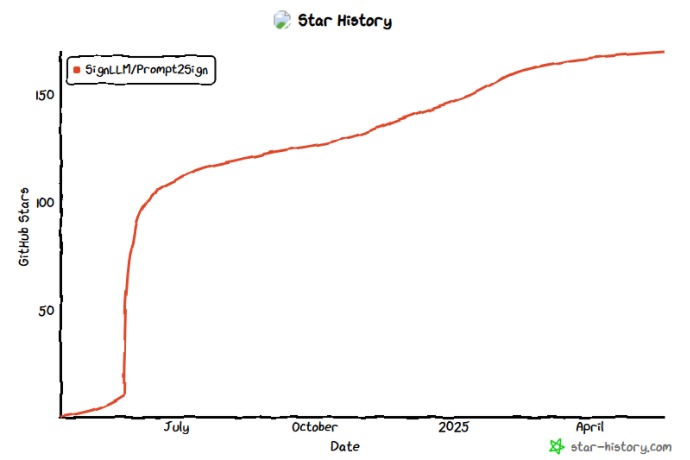

SignLLM has gained over 160 GitHub stars since launch, showing strong interest in multilingual sign language generation.

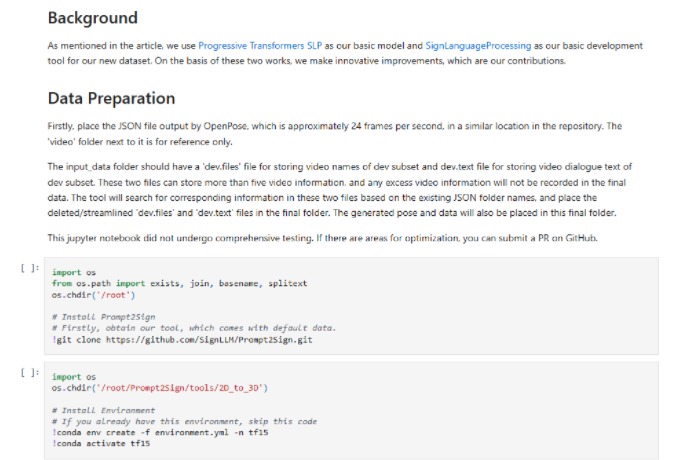

This notebook walks through data preparation from OpenPose JSON files, cloning the Prompt2Sign repo, and setting up the environment to generate poses from sign language videos.
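As a rough illustration of the data preparation step, the sketch below reads OpenPose-style JSON and groups the flat keypoint arrays into (x, y, confidence) triples. It assumes the standard OpenPose output layout (one JSON file per frame, a `people` list, and flat `*_keypoints_2d` arrays) and that file names sort in frame order. The helper names `to_triples`, `parse_frame`, and `load_clip` are hypothetical and are not taken from the notebook or the Prompt2Sign repo.

```python
import json
from pathlib import Path

# Field names as written by OpenPose's JSON output for each detected person.
KEYPOINT_FIELDS = (
    "pose_keypoints_2d",
    "hand_left_keypoints_2d",
    "hand_right_keypoints_2d",
)


def to_triples(flat):
    """Group a flat [x0, y0, c0, x1, y1, c1, ...] list into (x, y, confidence) tuples."""
    if len(flat) % 3 != 0:
        raise ValueError("expected a multiple of 3 values")
    return [tuple(flat[i:i + 3]) for i in range(0, len(flat), 3)]


def parse_frame(frame):
    """Return keypoints for the first detected person, or None if nobody was detected."""
    people = frame.get("people", [])
    if not people:
        return None
    person = people[0]
    # Missing fields are treated as empty keypoint lists.
    return {field: to_triples(person.get(field, [])) for field in KEYPOINT_FIELDS}


def load_clip(folder):
    """Parse every per-frame JSON file in a folder, sorted by file name (assumed frame order)."""
    frames = []
    for path in sorted(Path(folder).glob("*.json")):
        with path.open(encoding="utf-8") as handle:
            frames.append(parse_frame(json.load(handle)))
    return frames


# Synthetic frame with two body keypoints, used only to show the output shape.
example_frame = {"people": [{"pose_keypoints_2d": [10.0, 20.0, 0.9, 30.0, 40.0, 0.8]}]}
parsed = parse_frame(example_frame)
print(parsed["pose_keypoints_2d"])  # [(10.0, 20.0, 0.9), (30.0, 40.0, 0.8)]
```

Keeping only the first detected person is a simplification that suits single-signer videos; clips with several people in frame would need a selection rule.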

Prompt2Sign includes text, prompts, video frames, and compressed 3D poses across 8 sign languages. SignLLM generates digital pose data from text or prompts, outputting synthetic videos or 3D models.

Project description, demo and materials.

Technical article on the Prompt2Sign approach.

Demonstration of the sign-generating model.